The Ultimate Guide to RM Digital’s AI Content Detector Tool

In the rapidly evolving world of digital content, ensuring the authenticity and originality of written material has become increasingly crucial. With the rise of artificial intelligence (AI) and its application in content generation, distinguishing between human-written and AI-generated text can be a daunting task. This is where RM Digital’s AI Content Detector Tool comes into play. As a cutting-edge solution, this tool harnesses the power of advanced algorithms and natural language processing (NLP) to help users identify AI-generated content with a high degree of accuracy.

In this comprehensive guide, we will delve into the intricacies of RM Digital’s AI Content Detector Tool, exploring its features, benefits, and the technology that powers it. Whether you are a content creator, marketer, or simply someone who values the integrity of written content, this article will provide you with the knowledge and insights necessary to leverage this remarkable tool effectively.

What is RM Digital’s AI Content Detector Tool?

RM Digital’s AI Content Detector Tool is a sophisticated software solution designed to analyze and identify text that has been generated by artificial intelligence. By employing state-of-the-art machine learning algorithms and natural language processing techniques, this tool can accurately distinguish between human-written and AI-generated content.

The tool works by examining various linguistic patterns, syntactic structures, and semantic elements within a given piece of text. It looks for telltale signs that are characteristic of AI-generated content, such as:

- Lack of coherence and logical flow

- Inconsistent writing style

- Unnatural or repetitive phrasing

- Absence of personal anecdotes or experiences

- Overuse of certain words or phrases

By analyzing these factors, RM Digital’s AI Content Detector Tool can provide users with a reliable assessment of whether a particular piece of content was created by a human or generated by an AI algorithm.
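
To make two of these signals concrete, here is a minimal Python sketch that measures repetitive phrasing and overused vocabulary. It is an illustration only, not RM Digital’s implementation: the function names (`surface_signals`, `repeated_trigram_ratio`, `type_token_ratio`) and the choice of three-word sequences are assumptions made for this example.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def repeated_trigram_ratio(tokens: list[str]) -> float:
    """Share of three-word sequences that occur more than once."""
    trigrams = [tuple(tokens[i:i + 3]) for i in range(len(tokens) - 2)]
    if not trigrams:
        return 0.0
    counts = Counter(trigrams)
    repeated = sum(c for c in counts.values() if c > 1)
    return repeated / len(trigrams)


def type_token_ratio(tokens: list[str]) -> float:
    """Distinct words divided by total words; low values suggest overused vocabulary."""
    return len(set(tokens)) / len(tokens) if tokens else 0.0


def surface_signals(text: str) -> dict[str, float]:
    """Collect the hypothetical surface signals for one piece of text."""
    tokens = tokenize(text)
    return {
        "repeated_trigram_ratio": repeated_trigram_ratio(tokens),
        "type_token_ratio": type_token_ratio(tokens),
    }


signals = surface_signals("the cat sat on the mat the cat sat on the mat")
print(signals)
```

A higher repeated-trigram ratio and a lower type-token ratio both point to repetitive text. On their own they say nothing definite about authorship, since plenty of human writing repeats itself, so signals like these would only ever be inputs to a broader assessment.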

Why is Detecting AI-Generated Content Important?

In today’s digital landscape, the ability to detect AI-generated content has become increasingly vital for several reasons:

- Maintaining Content Integrity: AI-generated content can often lack the depth, nuance, and personal touch that human-written content possesses. By identifying and filtering out AI-generated text, content creators and publishers can ensure that their material remains authentic, engaging, and valuable to their audience.

- Preventing Plagiarism: AI models are trained on large volumes of existing text and can sometimes reproduce phrasing that closely mirrors their sources. This can lead to unintentional plagiarism and copyright infringement issues. By using RM Digital’s AI Content Detector Tool, users can safeguard against the use of plagiarized or unoriginal content.

- Ensuring SEO Compliance: Search engines, such as Google, place a high value on original and high-quality content. AI-generated text may be flagged as low-quality or spammy, which can negatively impact a website’s search engine rankings. By detecting and removing AI-generated content, website owners can maintain their SEO compliance and avoid potential penalties.

- Enhancing User Experience: Human-written content often offers a more engaging and relatable experience for readers. By ensuring that the content on a website or platform is primarily human-generated, businesses can foster a stronger connection with their audience and build trust and credibility.

- Maintaining Ethical Standards: The use of AI-generated content without proper disclosure can be considered deceptive and unethical. By using RM Digital’s AI Content Detector Tool, organizations can demonstrate their commitment to transparency and integrity in their content creation practices.

How RM Digital’s AI Content Detector Tool Works

RM Digital’s AI Content Detector Tool employs a combination of advanced algorithms and natural language processing techniques to analyze and identify AI-generated content. Let’s take a closer look at the key components that power this tool:

1. Machine Learning Algorithms

At the core of RM Digital’s AI Content Detector Tool are sophisticated machine learning algorithms. These algorithms have been trained on vast datasets containing both human-written and AI-generated text. By exposing the algorithms to diverse examples, they have learned to recognize the subtle patterns and characteristics that distinguish AI-generated content from human-written material.

The machine learning models used in the tool are continually updated and refined as new data becomes available. This ensures that the tool remains accurate and effective in detecting the latest advancements in AI content generation techniques.
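
The general idea of training on labeled human-written and AI-generated text can be sketched in a few lines of Python. The article does not say which model RM Digital uses, so this example substitutes a simple multinomial naive Bayes classifier as a stand-in. The class name `NaiveBayesTextClassifier`, the labels `"human"` and `"ai"`, and the four training sentences are all invented for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


class NaiveBayesTextClassifier:
    """Multinomial naive Bayes with add-one (Laplace) smoothing."""

    def __init__(self) -> None:
        self.word_counts: dict[str, Counter] = {}
        self.doc_counts: Counter = Counter()
        self.vocabulary: set[str] = set()

    def train(self, text: str, label: str) -> None:
        """Add one labeled example to the per-label word counts."""
        tokens = tokenize(text)
        self.word_counts.setdefault(label, Counter()).update(tokens)
        self.doc_counts[label] += 1
        self.vocabulary.update(tokens)

    def log_scores(self, text: str) -> dict[str, float]:
        """Return the unnormalized log-probability of the text under each label."""
        tokens = tokenize(text)
        total_docs = sum(self.doc_counts.values())
        vocab_size = len(self.vocabulary)
        scores = {}
        for label, counts in self.word_counts.items():
            total_words = sum(counts.values())
            score = math.log(self.doc_counts[label] / total_docs)
            for token in tokens:
                score += math.log((counts[token] + 1) / (total_words + vocab_size))
            scores[label] = score
        return scores

    def predict(self, text: str) -> str:
        """Return the label with the highest score."""
        scores = self.log_scores(text)
        return max(scores, key=scores.get)


# Toy, hand-written examples; a real detector would need a large labeled corpus.
model = NaiveBayesTextClassifier()
model.train("honestly my old laptop died mid-sentence and i lost the draft", "human")
model.train("we argued about the ending over coffee for an hour", "human")
model.train("in conclusion this comprehensive guide will delve into the key benefits", "ai")
model.train("in the rapidly evolving landscape it is crucial to leverage key insights", "ai")

print(model.predict("this comprehensive guide will delve into key insights"))
```

Because `train` only adds to running counts, new labeled examples can be folded in at any time without starting over, which loosely mirrors the idea of a model that is refined as new data becomes available. With only four training sentences the predictions here are a demonstration of the mechanics, not evidence of real-world accuracy.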

2. Natural Language Processing (NLP)

Natural Language Processing (NLP) is a branch of artificial intelligence that focuses on the interaction between computers and human language. RM Digital’s AI Content Detector Tool leverages NLP techniques to analyze the linguistic features and structures of a given piece of text.

Some of the NLP techniques employed by the tool include:

- Tokenization: Breaking down the text into individual words or tokens for analysis.

- Part-of-Speech (POS) Tagging: Identifying the grammatical role of each word in a sentence, such as nouns, verbs, adjectives, etc.

- Named Entity Recognition (NER): Identifying and categorizing named entities, such as people, organizations, locations, etc., within the text.

- Sentiment Analysis: Determining the overall sentiment or emotion conveyed by the text, such as positive, negative, or neutral.

By applying these NLP techniques, RM Digital’s AI Content Detector Tool can gain a deep understanding of the linguistic characteristics and patterns present in the text, enabling it to make accurate assessments of its origin.
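
Two of these techniques are simple enough to sketch with Python’s standard library alone. The example below shows regex-based tokenization and a lexicon-based sentiment label. The word lists and function names are assumptions made for this illustration, not part of RM Digital’s tool. POS tagging and named entity recognition are left out because they generally depend on trained models.

```python
import re

# Tiny illustrative lexicons; real sentiment analysis relies on far larger
# resources or on trained models.
POSITIVE_WORDS = {"good", "great", "reliable", "helpful", "clear"}
NEGATIVE_WORDS = {"bad", "poor", "unreliable", "confusing", "slow"}


def tokenize(text: str) -> list[str]:
    """Split text into word tokens and standalone punctuation marks."""
    return re.findall(r"\w+(?:'\w+)?|[^\w\s]", text)


def sentiment(tokens: list[str]) -> str:
    """Label a token list positive, negative, or neutral by counting lexicon hits."""
    words = [t.lower() for t in tokens]
    positive_hits = sum(w in POSITIVE_WORDS for w in words)
    negative_hits = sum(w in NEGATIVE_WORDS for w in words)
    if positive_hits > negative_hits:
        return "positive"
    if negative_hits > positive_hits:
        return "negative"
    return "neutral"


tokens = tokenize("It's clear, reliable output.")
print(tokens)
print(sentiment(tokens))
```

A word-counting approach like this ignores negation and context (it would misread "not reliable"), which is one reason production systems tend to use trained models instead of fixed word lists.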

3. Contextual Analysis

In addition to analyzing individual linguistic elements, RM Digital’s AI Content Detector Tool also considers the broader context in which the text appears. This contextual analysis helps the tool to identify inconsistencies or anomalies that may indicate the presence of AI-generated content.

For example, the tool may examine factors such as:

- Topic Consistency: Assessing whether the text stays on topic and maintains a coherent flow of ideas throughout.

- Stylistic Consistency: Analyzing whether the writing style remains consistent across different sections or paragraphs of the text.

- Factual Accuracy: Checking whether the information presented in the text is accurate and aligns with established facts or sources.

By considering these contextual elements, RM Digital’s AI Content Detector Tool can provide a more comprehensive and reliable assessment of the text’s authenticity.
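
As a rough illustration of the first two checks, the Python sketch below scores topic consistency as the word overlap between neighboring paragraphs and stylistic consistency as the spread of average sentence length. These particular measures (cosine similarity over word counts, standard deviation of sentence length) are assumptions chosen for simplicity; the article does not specify how RM Digital computes them. Factual accuracy is not covered, since it requires an external source of truth.

```python
import math
import re
from collections import Counter
from statistics import pstdev


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def topic_consistency(paragraphs: list[str]) -> float:
    """Average word-overlap similarity between each pair of adjacent paragraphs."""
    bags = [Counter(tokenize(p)) for p in paragraphs]
    pairs = list(zip(bags, bags[1:]))
    if not pairs:
        return 1.0
    return sum(cosine_similarity(a, b) for a, b in pairs) / len(pairs)


def mean_sentence_length(paragraph: str) -> float:
    """Average number of words per sentence in one paragraph."""
    sentences = [s for s in re.split(r"[.!?]+", paragraph) if s.strip()]
    if not sentences:
        return 0.0
    return sum(len(tokenize(s)) for s in sentences) / len(sentences)


def style_spread(paragraphs: list[str]) -> float:
    """Population standard deviation of mean sentence length across paragraphs."""
    lengths = [mean_sentence_length(p) for p in paragraphs]
    return pstdev(lengths) if lengths else 0.0


doc = [
    "Cats sleep most of the day. They wake up to eat.",
    "Cats also sleep at night. They eat again in the morning.",
]
print(topic_consistency(doc), style_spread(doc))
```

A topic score near 1 means neighboring paragraphs share most of their vocabulary, and a style spread near 0 means sentence length barely changes from paragraph to paragraph. What counts as suspicious in either direction is a judgment call that would need tuning against real examples.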

4. Continuous Learning and Improvement

One of the key strengths of RM Digital’s AI Content Detector Tool is its ability to continuously learn and adapt to new developments in AI content generation. As AI technologies advance and new techniques emerge, the tool’s algorithms are regularly updated and fine-tuned to ensure their effectiveness.

The tool’s machine learning models are trained on an ever-expanding dataset of human-written and AI-generated content. This ongoing training process allows the tool to stay ahead of the curve and maintain its accuracy in detecting even the most sophisticated AI-generated text.

Furthermore, RM Digital actively seeks feedback from users and incorporates their insights and experiences into the tool’s development. This collaborative approach ensures that the AI Content Detector Tool remains user-friendly, reliable, and aligned with the evolving needs of its users.

Benefits of Using RM Digital’s AI Content Detector Tool

Now that we have a better understanding of how RM Digital’s AI Content Detector Tool works, let’s explore some of the key benefits it offers to users:

- Accuracy and Reliability: With its advanced algorithms and continuous learning capabilities, RM Digital’s AI Content Detector Tool provides highly accurate and reliable results. Users can trust the tool to identify AI-generated content with a high degree of precision, saving them time and effort in manually reviewing and verifying the authenticity of written material.

- Efficiency and Time-Saving: Analyzing large volumes of text for AI-generated content can be a time-consuming and labor-intensive task. RM Digital’s AI Content Detector Tool automates this process, allowing users to quickly scan and assess the originality of their content. This efficiency enables users to focus their resources on other critical aspects of their work, such as content creation, editing, and distribution.

- Scalability: Whether you are an individual content creator, a small business, or a large organization, RM Digital’s AI Content Detector Tool can scale to meet your needs. The tool can handle a wide range of content types and volumes, making it suitable for various applications and industries.

- Integration and Compatibility: RM Digital’s AI Content Detector Tool is designed to seamlessly integrate with existing content management systems, platforms, and workflows. This compatibility ensures that users can easily incorporate the tool into their current processes without significant disruptions or learning curves.

- Customization and Flexibility: RM Digital understands that each user may have unique requirements and preferences when it comes to content analysis. The AI Content Detector Tool offers customization options, allowing users to fine-tune the tool’s parameters and settings to better suit their specific needs. This flexibility enables users to optimize the tool’s performance and align it with their content quality standards.

- Continuous Updates and Support: As mentioned earlier, RM Digital is committed to the ongoing development and improvement of its AI Content Detector Tool. Users can expect regular updates that enhance the tool’s accuracy, performance, and features. Additionally, RM Digital provides comprehensive support and resources to help users maximize the value of the tool and address any questions or concerns they may have.

Real-World Applications and Case Studies

To better illustrate the effectiveness and versatility of RM Digital’s AI Content Detector Tool, let’s take a look at some real-world applications and case studies:

1. Content Marketing Agency

A leading content marketing agency, specializing in creating high-quality blog posts and articles for its clients, implemented RM Digital’s AI Content Detector Tool to ensure the originality and authenticity of its content. By using the tool to scan and verify the work of its writers, the agency was able to:

- Maintain a consistent level of content quality across all clients and projects

- Identify and address instances of unintentional plagiarism or AI-generated content

- Provide clients with assurance of the originality and human authorship of the delivered content

- Streamline its content review process, saving time and resources

As a result, the content marketing agency saw an increase in client satisfaction, improved search engine rankings for its clients’ websites, and a reduction in content-related disputes or issues.

2. Academic Institution

An academic institution, concerned about the increasing prevalence of AI-generated essays and assignments submitted by students, adopted RM Digital’s AI Content Detector Tool as part of its plagiarism detection process. By integrating the tool into its learning management system, the institution was able to:

- Automatically scan student submissions for signs of AI-generated content

- Identify cases of academic dishonesty and enforce its policies on original work

- Provide students with immediate feedback on the authenticity of their submissions

- Encourage students to develop their own critical thinking and writing skills

The implementation of RM Digital’s AI Content Detector Tool helped the academic institution maintain academic integrity, foster a culture of original thought, and better prepare students for success in their future careers.

3. News and Media Organization

A reputable news and media organization, committed to delivering accurate and trustworthy information to its audience, employed RM Digital’s AI Content Detector Tool to verify the authenticity of its articles and news stories. By using the tool, the organization was able to:

- Ensure that all published content was written by human journalists and reporters

- Maintain the credibility and reputation of its brand

- Identify and prevent the spread of AI-generated misinformation or propaganda

- Provide readers with confidence in the integrity and reliability of its reporting

By leveraging RM Digital’s AI Content Detector Tool, the news and media organization strengthened its position as a trusted source of information and upheld the highest standards of journalistic ethics.

These case studies demonstrate the wide-ranging applications and benefits of RM Digital’s AI Content Detector Tool across various industries and sectors. Whether you are a content creator, educator, or media professional, this tool can help you maintain the authenticity, quality, and trustworthiness of your written content.

Frequently Asked Questions (FAQ)

-

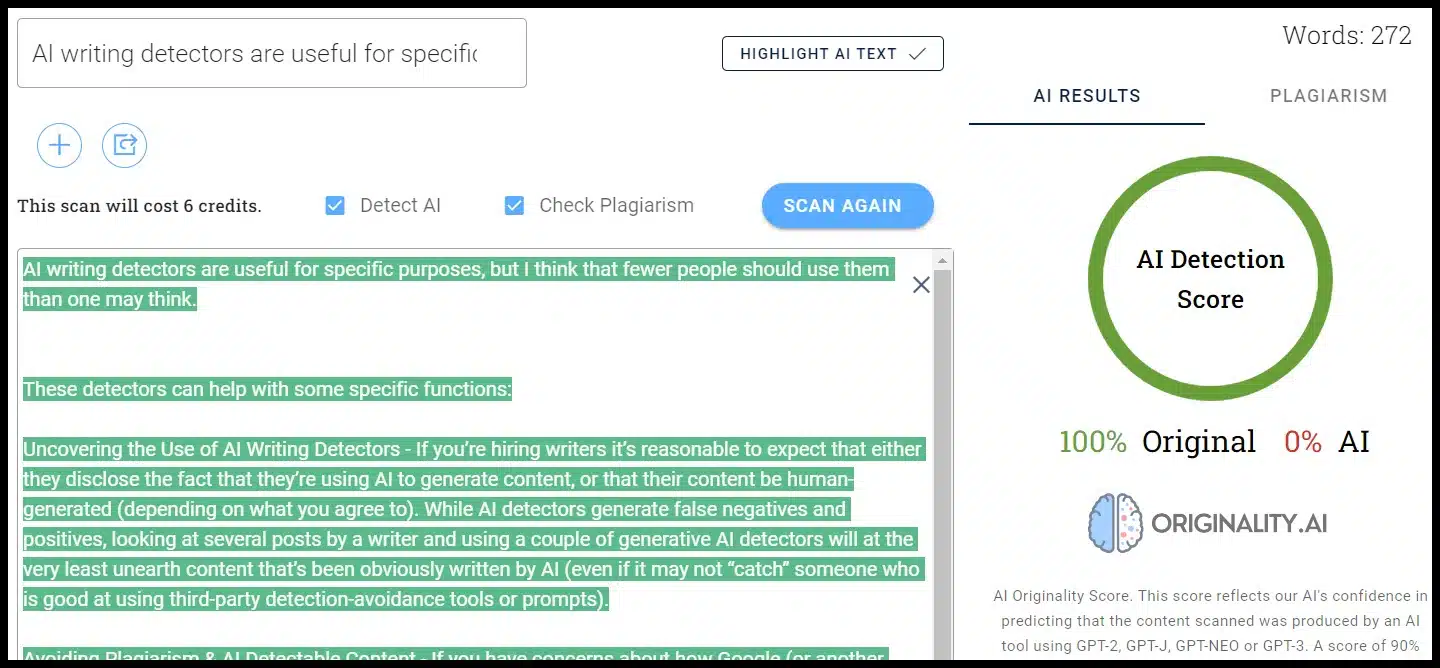

Is RM Digital’s AI Content Detector Tool 100% accurate? While RM Digital’s AI Content Detector Tool employs advanced algorithms and continuously learns to improve its accuracy, no tool can guarantee 100% accuracy in all cases. However, the tool’s high success rate and ongoing development make it one of the most reliable solutions available for detecting AI-generated content.

-

Can the tool detect AI-generated content in languages other than English? Currently, RM Digital’s AI Content Detector Tool is optimized for analyzing English language content. However, the company is actively working on expanding the tool’s language capabilities to support a wider range of languages in the future.

-

How long does it take for the tool to analyze a piece of content? The analysis time depends on the length and complexity of the text being processed. However, RM Digital’s AI Content Detector Tool is designed to provide quick and efficient results, with most analyses completed within a matter of seconds to a few minutes.

-

Is there a limit to the amount of content that can be analyzed? RM Digital offers flexible pricing plans and scalable solutions to accommodate the needs of various users. Whether you need to analyze a single document or a large corpus of text, the AI Content Detector Tool can be customized to handle your specific requirements.

-

Can the tool be integrated with my existing content management system? Yes, RM Digital’s AI Content Detector Tool is designed to be compatible with a wide range of content management systems and platforms. The company provides API access and integration support to ensure seamless incorporation into your existing workflows.

-

How often is the tool updated with new AI content detection capabilities? RM Digital is committed to staying at the forefront of AI content detection technology. The company regularly updates its AI Content Detector Tool with new features, algorithm improvements, and expanded detection capabilities to keep pace with the latest advancements in AI-generated content.

-

What should I do if I suspect that a piece of content is AI-generated? If RM Digital’s AI Content Detector Tool identifies a piece of content as potentially AI-generated, it is recommended to review the content manually and consider additional factors, such as the context and purpose of the text. If the content is determined to be AI-generated, appropriate actions, such as revision, attribution, or removal, should be taken based on your specific use case and guidelines.

-

Can I use the tool to analyze content from websites or online sources? Yes, RM Digital’s AI Content Detector Tool can be used to analyze content from various sources, including websites, online articles, and digital documents. Simply input the text into the tool or provide the URL of the content you wish to analyze.

-

Is the content analyzed by the tool kept confidential? RM Digital takes data privacy and security seriously. All content submitted to the AI Content Detector Tool is treated with the utmost confidentiality and is not shared with any third parties. The company employs strict security measures to protect user data and ensure the integrity of the analysis process.

-

How can I get started with using RM Digital’s AI Content Detector Tool? To get started with RM Digital’s AI Content Detector Tool, visit the company’s website at www.rmdigital.com. There, you can sign up for an account, choose a pricing plan that suits your needs, and access the tool’s features and resources. If you have any questions or require assistance, RM Digital’s support team is available to help you every step of the way.

Conclusion

RM Digital’s AI Content Detector Tool is a powerful and essential solution for anyone who values the authenticity and integrity of written content. As AI-generated text becomes increasingly sophisticated and prevalent, the ability to accurately identify and distinguish it from human-written material is crucial.

By leveraging advanced machine learning algorithms, natural language processing techniques, and continuous learning capabilities, RM Digital’s AI Content Detector Tool provides users with a reliable and efficient means of analyzing and verifying the originality of their content.

Whether you are a content creator, marketer, educator, or media professional, implementing this tool into your workflow can help you:

- Maintain the quality and credibility of your content

- Save time and resources in content review and verification processes

- Protect against plagiarism and ensure the ethical use of AI technology

- Foster trust and engagement with your audience

As we navigate the evolving landscape of AI-generated content, tools like RM Digital’s AI Content Detector will play an increasingly vital role in upholding the value and authenticity of human-created text.

Key Takeaways:

- RM Digital’s AI Content Detector Tool uses advanced algorithms and NLP to identify AI-generated content.

- The tool offers accuracy, efficiency, scalability, and customization to meet the needs of various users and industries.

- Real-world case studies demonstrate the effectiveness and versatility of the tool across different sectors.

- Regular updates and continuous learning ensure the tool stays ahead of the latest advancements in AI content generation.

- Implementing the tool can help maintain content quality, save time and resources, and foster trust with audiences.

If you would like to learn more about RM Digital’s AI Content Detector Tool and how it can benefit your organization, please visit our website at razakmusah.com or contact our friendly support team. We are here to help you navigate the world of AI-generated content and ensure the authenticity of your written material.
